Ian here—

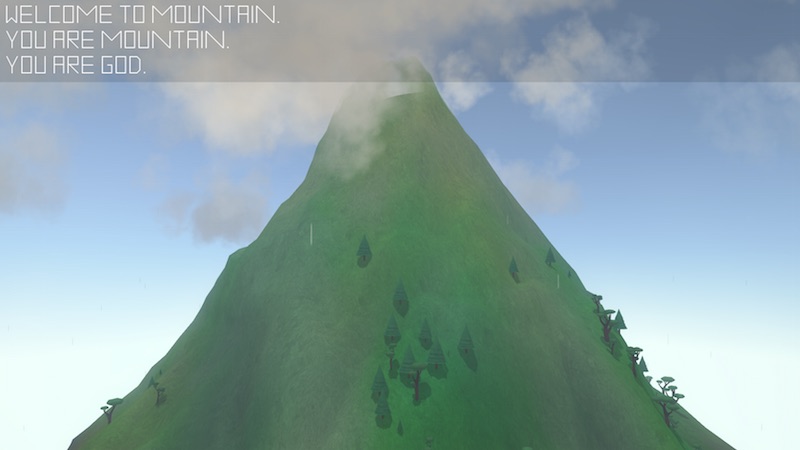

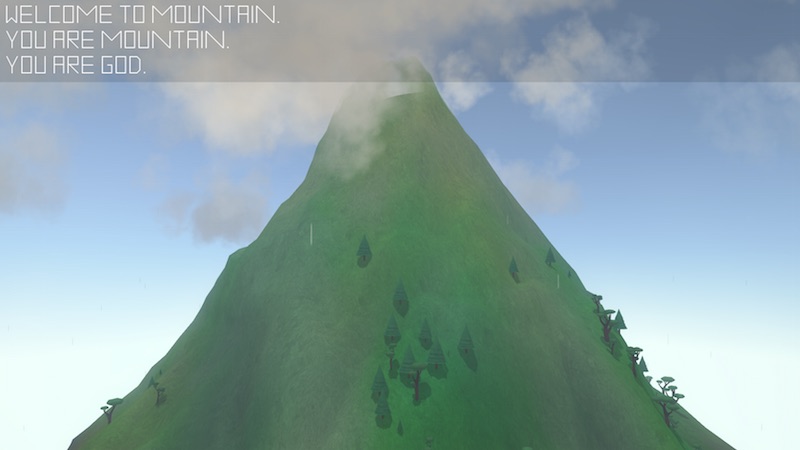

2017 marks the year of animator David OReilly’s return to the medium of videogames, following up on his strange and serene digital-art-toy-screensaver-thing Mountain (2014). His new game, Everything, released on PS4 on March 21st, and releases on Windows, Mac and Linux this Friday.

The game’s title, Everything, is also the game’s premise: It is a game about everything. Specifically, it is a game in which players can be everything, switching at will from trees to koalas to rocks to quarks and back. I haven’t had a chance to sit down with it yet—I suspect I’ll make time for it once it’s out for PC—but I did want to take the advent of its multi-platform release as an opportunity to muse on this premise’s history in gaming.

Everything may be the first game that explicitly promises to allow us to be everything, but games have previously offered the ability for us to step into the role of quite a lot of things, including a surprising range of inanimate objects. “The child plays at being not only a shopkeeper or teacher,” wrote Walter Benjamin, “but also a windmill and a train.”[i] Games have proved to be a continuing outlet for this childhood animist fantasy—why, in just a couple weeks’ time, we’re going to be able to play as a coffee mug!

Join me, won’t you, in a breezy tour of some of the stranger things games have let us be.
